Description

pycaret version checks

- I have checked that this issue has not already been reported here.
- I have confirmed this bug exists on the latest version of pycaret.
- I have confirmed this bug exists on the master branch of pycaret (pip install -U git+https://github.com/pycaret/pycaret.git@master).

Issue Description

Similar issue to those identified in #3351.

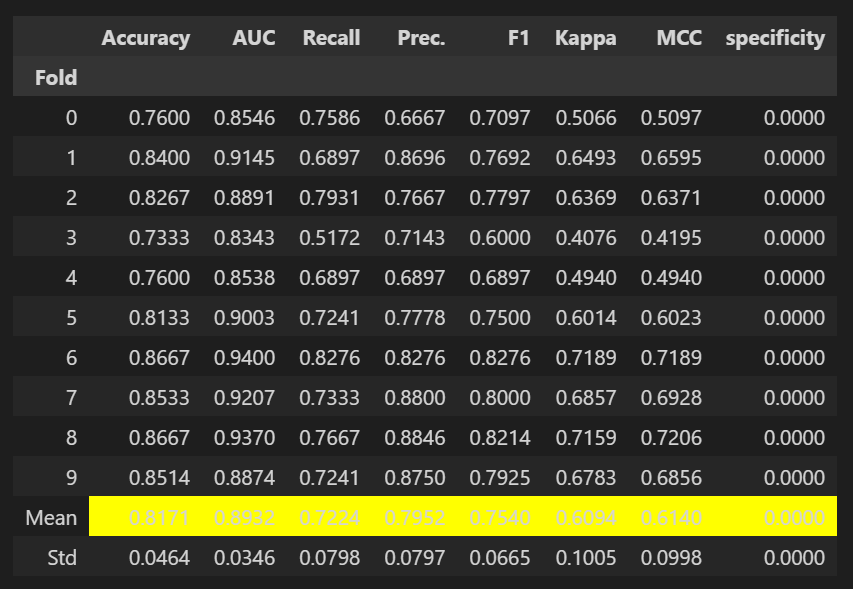

Specificity is the recall of the negative class in a binary classification task, so the custom metric simply calls scikit-learn's `recall_score` with `pos_label=0`. The `zero_division` parameter is set to 1 so that 1 is returned when the denominator is zero (that is, when `y_true` contains no negative samples). Note that scikit-learn's default, `zero_division='warn'`, behaves like `zero_division=0` but also raises a warning.
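As a sanity check outside PyCaret, the sketch below compares the `recall_score`-based definition against TN / (TN + FP) computed from the confusion matrix, and shows the effect of `zero_division`. The toy label arrays are made up for illustration and assume the labels are already encoded as 0/1; they are not taken from the `juice` dataset.

```python
from sklearn.metrics import confusion_matrix, recall_score


def specificity(y_true, y_pred):
    # Recall of the negative class (label 0); returns 1 when there are
    # no true negatives or false positives to divide by.
    return recall_score(y_true, y_pred, pos_label=0, zero_division=1)


# Hypothetical toy labels, already encoded as 0/1.
y_true = [0, 0, 0, 1, 1, 0]
y_pred = [0, 1, 0, 1, 0, 0]

# Specificity from the confusion matrix: TN / (TN + FP).
tn, fp, fn, tp = confusion_matrix(y_true, y_pred, labels=[0, 1]).ravel()
manual_specificity = tn / (tn + fp)

print(specificity(y_true, y_pred), manual_specificity)

# Edge case: no negative samples in y_true, so the denominator is zero.
only_pos_true = [1, 1, 1]
only_pos_pred = [1, 0, 1]
with_one = recall_score(only_pos_true, only_pos_pred, pos_label=0, zero_division=1)
with_zero = recall_score(only_pos_true, only_pos_pred, pos_label=0, zero_division=0)
print(with_one, with_zero)
```

If the function behaves like this on plain 0/1 arrays, a constant 0 inside PyCaret would suggest the labels passed to the metric do not match `pos_label=0`, though this sketch alone does not confirm that.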

Reproducible Example

```python
from pycaret.datasets import get_data
from sklearn.metrics import recall_score

data = get_data('juice')

from pycaret.classification import *

s = setup(data, target = 'Purchase', session_id = 123)

# create a custom function
def specificity(y_true, y_pred):
    return recall_score(y_true, y_pred, pos_label=0, zero_division=1)

# add metric to PyCaret
add_metric('specificity', 'specificity', specificity, greater_is_better = True)

lr = create_model('lr')
```

Expected Behavior

Specificity should be calculated correctly.

Actual Results

Specificity is returned as all zeros.